Hello!

I’m working on a project where I’m scraping data from a website and adding that data to an Excel sheet. Each scrape from the website has multiple elements that I want to put into the Excel sheet. I had no issues when using the Write Excel file activity but I have my bot running a loop so it continues to scrape data from multiple pages. I tried to use Append row but that wasn’t working as it’s not just one row of information at a time. Each loop the data is successfully being scraped but it’s overwriting the previous data in that Excel sheet.

If there’s a way to update the Excel sheet with bigger portions of data and just keep adding to it, please let me know!!

Thank you very much!

2 Likes

Hey @poojargaddam I would suggest having the bot create a blank file in the beginning of the work flow and then append to that existing file.

Hello @poojargaddam, I just wanted to follow up with you on this, I am super interested in knowing if you were able to find your solution here.

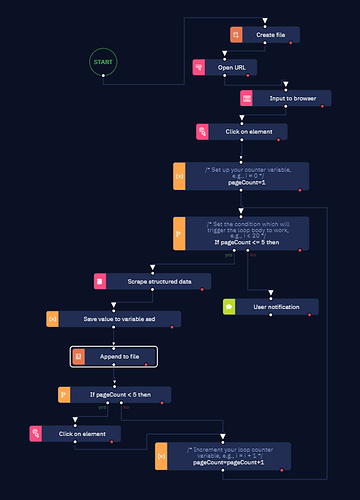

Hi. I’m having the same problem. I want to save the results of a google search in an excel file. I want to save the results from 5 different pages and I’m using the Scrap structured Data activity. I’ve managed to make the bot save the data to an excel sheet and go to the next page, but it’s overwriting the data saved in the previous loop.

I’ve tried your solution, as shown in the following snapshot. My computer screen is too small, sorry about that. I hope it’s possible to see everything.

But it doesn’t really work for me. The bot runs without errors, but when I try to open the excel file, it says Excel can’t open the file because its format or extension isn’t valid and it might be corrupted.

I thought maybe using Write Excel range instead of Append to file might work, but I don’t know how to tell the bot to put the data in the next blank line, since I have to set the range. Any tips on how to solve this issue? Thank you.

1 Like

I did a robot named “Excel line count” to receive a directory path and return to me the number of used rows. In this bot I return that the next blank line is a number, and then I’ll use that in my loop instead of starting on line 1 or 2.

1 Like

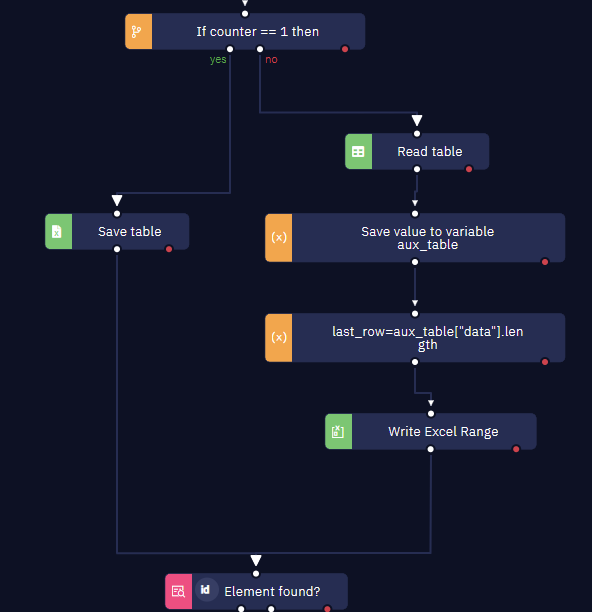

@poojargaddam and @cris-dsc, this is how I solved it:

For the first scraping capture I use “Save table” to create the spreadsheet. Then I use “Read table”, I calculate the last line and finally use “Write Excel Range” to add the new scraping capture. To specify the value in the “Write Excel Range” I’m using SSD_table["data"] in the “Calculate a value” field.

4 Likes

@dm.ferreyra I managed to solve it by using two loops (a for each row inside a do... while) with an Append row to excel file activity, but I think I like your solution better. I’ll try it out! Thank you for taking the time to help.

EDIT: I’ve tried your solution and it works. But after adapting my original algorithm, it seems to run faster, with an average difference of 3s. I figured the Read Table activity in your solution is what is causing it to take longer.

2 Likes